See 20 real examples of AI in education from schools using it for IEPs, lesson planning, grading, and tutoring. Organized by job, not by product name.

Your teachers are already using AI. Most of them figured it out on their own, without any guidance from the district.

One is using five different tools to build a single book unit. Another has been drafting IEPs with ChatGPT for a year. A third switched tools twice this semester because the first one was too slow.

You don't need another article about whether AI belongs in schools.

Here are 20 real-world examples of AI in education, organized by the job they're using it for.

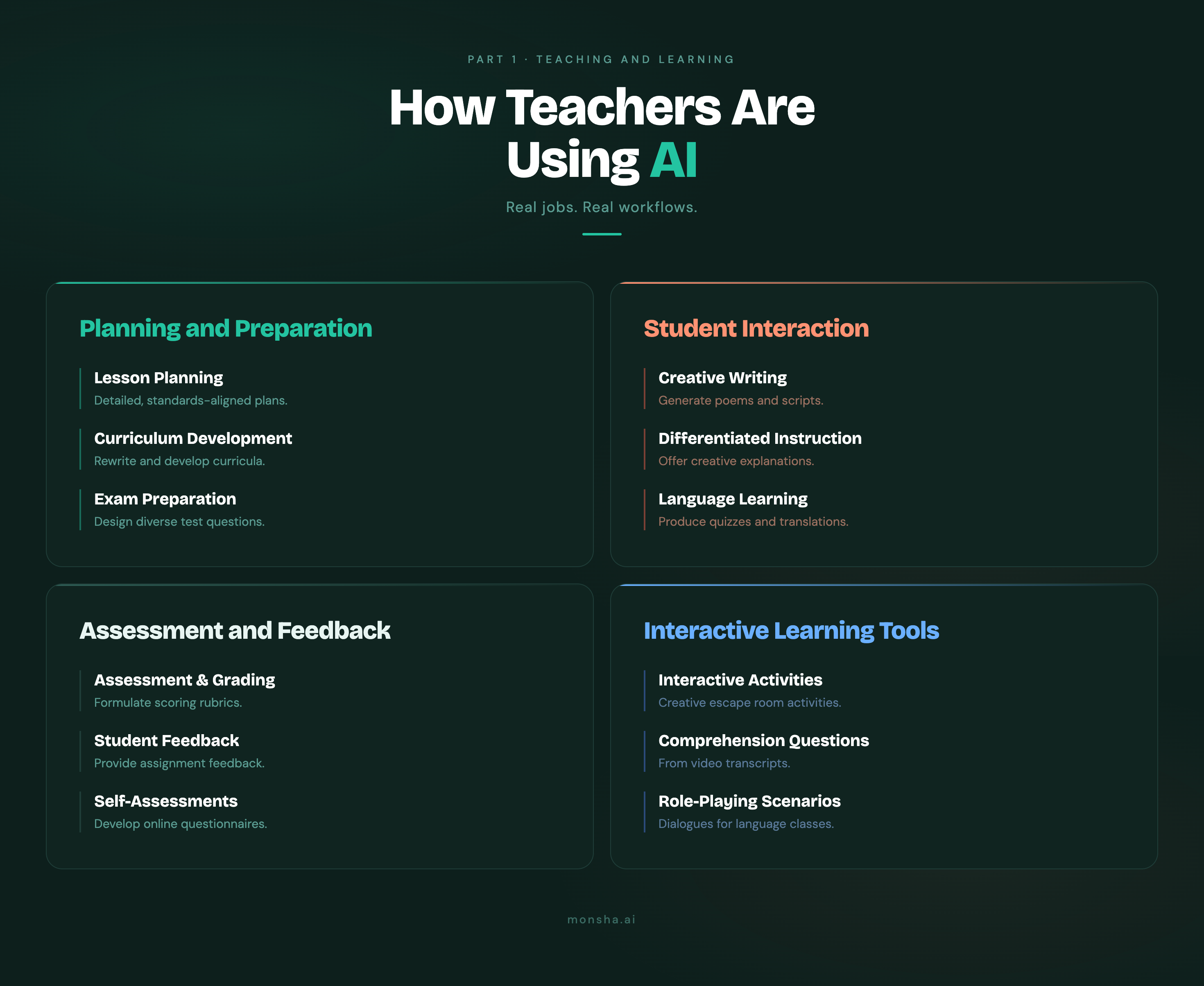

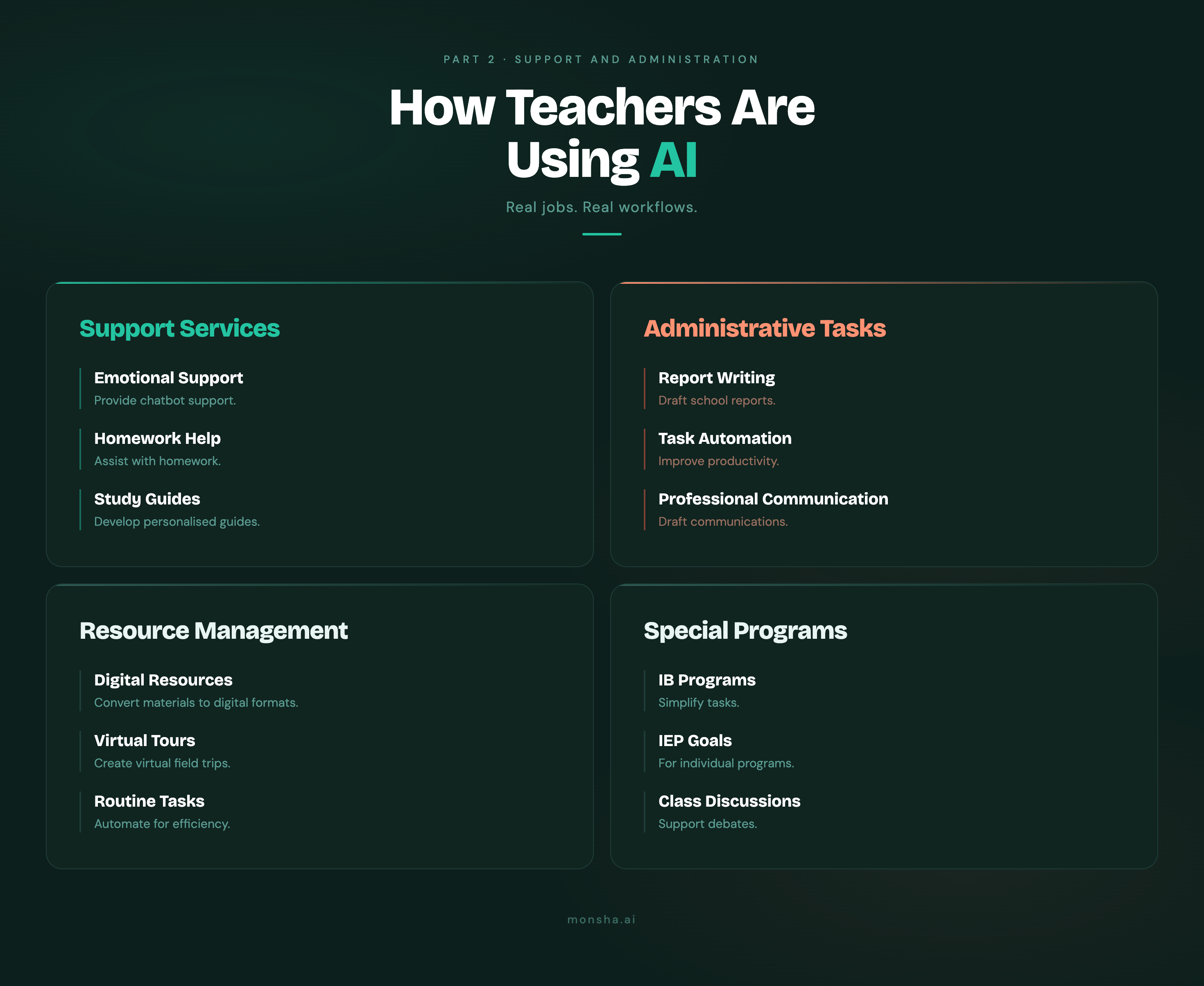

Schools are using AI for six primary jobs: writing IEPs, planning lessons, creating classroom resources, personalizing student practice, giving feedback, and identifying at-risk students early. The adoption is already widespread. Here is what it looks like in practice.

First, the scale.

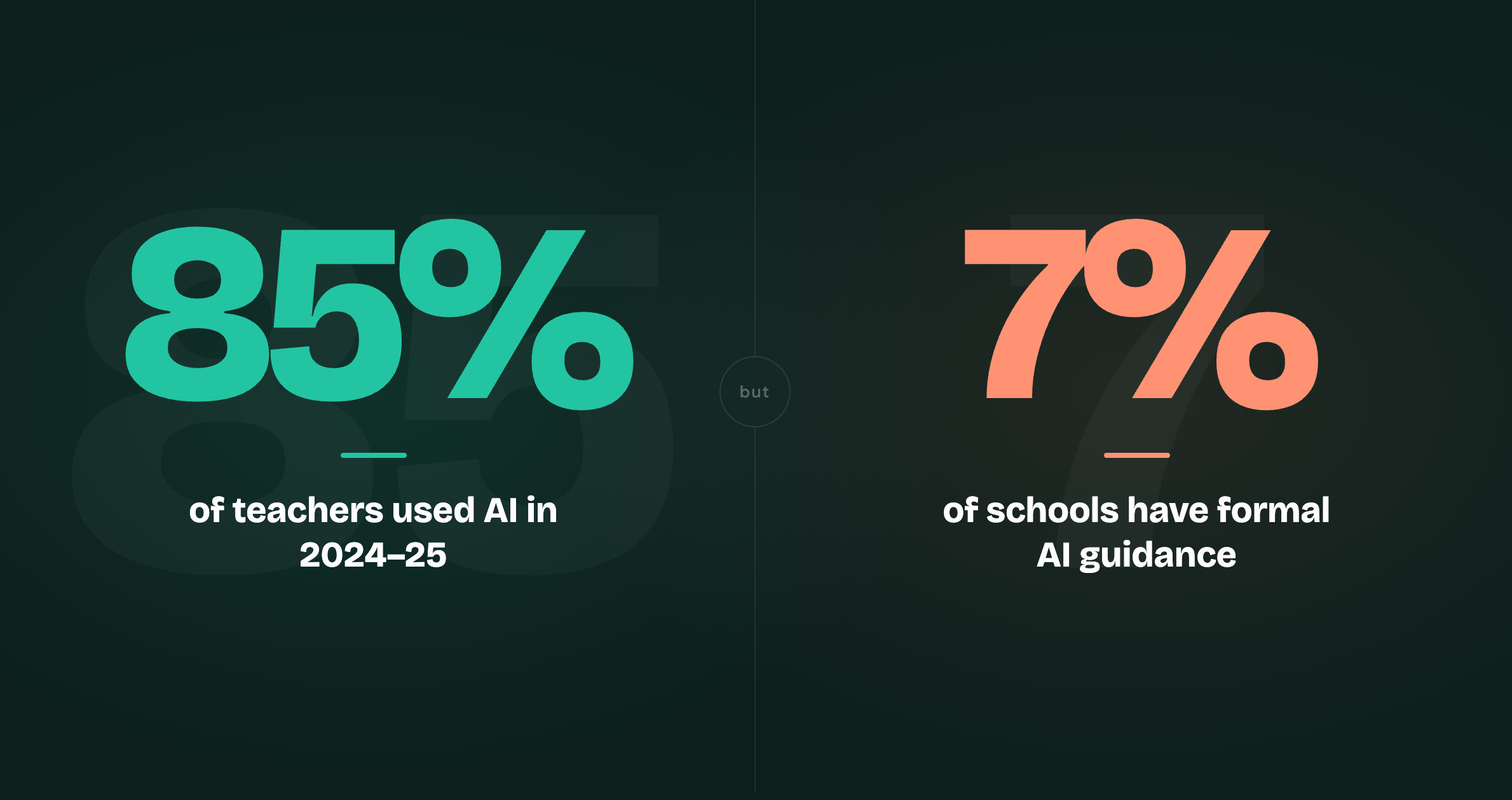

According to a CDT report, 85% of teachers and 86% of students used AI at least once during the 2024-25 school year. We're well past the pilot phase.

But the more telling number is what's happening below the district level. Programs.com found that 43% of teachers are buying AI tools with their own money (yes, their own money). None of this went through a procurement cycle. These are individual teachers picking up subscriptions on their own because they needed a problem solved last Tuesday. And only 7% of schools worldwide have any formal AI guidance in place to help them do it well.

So AI adoption in schools is already happening, and it's mostly uncoordinated. The people doing it didn't wait for permission.

(If you want to understand the broader benefits case first, this guide covers what the research says about AI and teacher workload. This article is about what that adoption actually looks like in practice.)

One teacher we spoke with was running five separate tools just to build a single book unit. He didn't plan it that way. He just found what worked for each job, one tool at a time. And that's the pattern across every example here: AI earns a foothold where it solves a specific, defined job. Use this tool for IEPs. This one for lesson planning. This one for differentiated worksheets.

The examples below are organized by that logic. By the job.

IEP writing is the job where AI went from experiment to habit fastest.

Seventy percent of US public schools reported special education teacher vacancies in 2023-24. The teachers who stayed often did so in spite of the paperwork, not because of it. A Teach Plus report found that former special educators in Illinois cited IEP paperwork as one of the main reasons they left. And researcher Harkins-Brown told GovTech what a lot of sped teachers already feel: "We've got a national crisis of vacancies of special education teachers. We have people not wanting to enter the field, and we have people who are already in it going, 'Gosh, this is a lot of paperwork, and I just wanted to teach.'"

Before AI, the workaround was goal banks, those drop-down menus of pre-written objectives. Faster than writing from scratch, sure, but the result was cookie-cutter IEPs that looked nearly identical for students with very different needs.

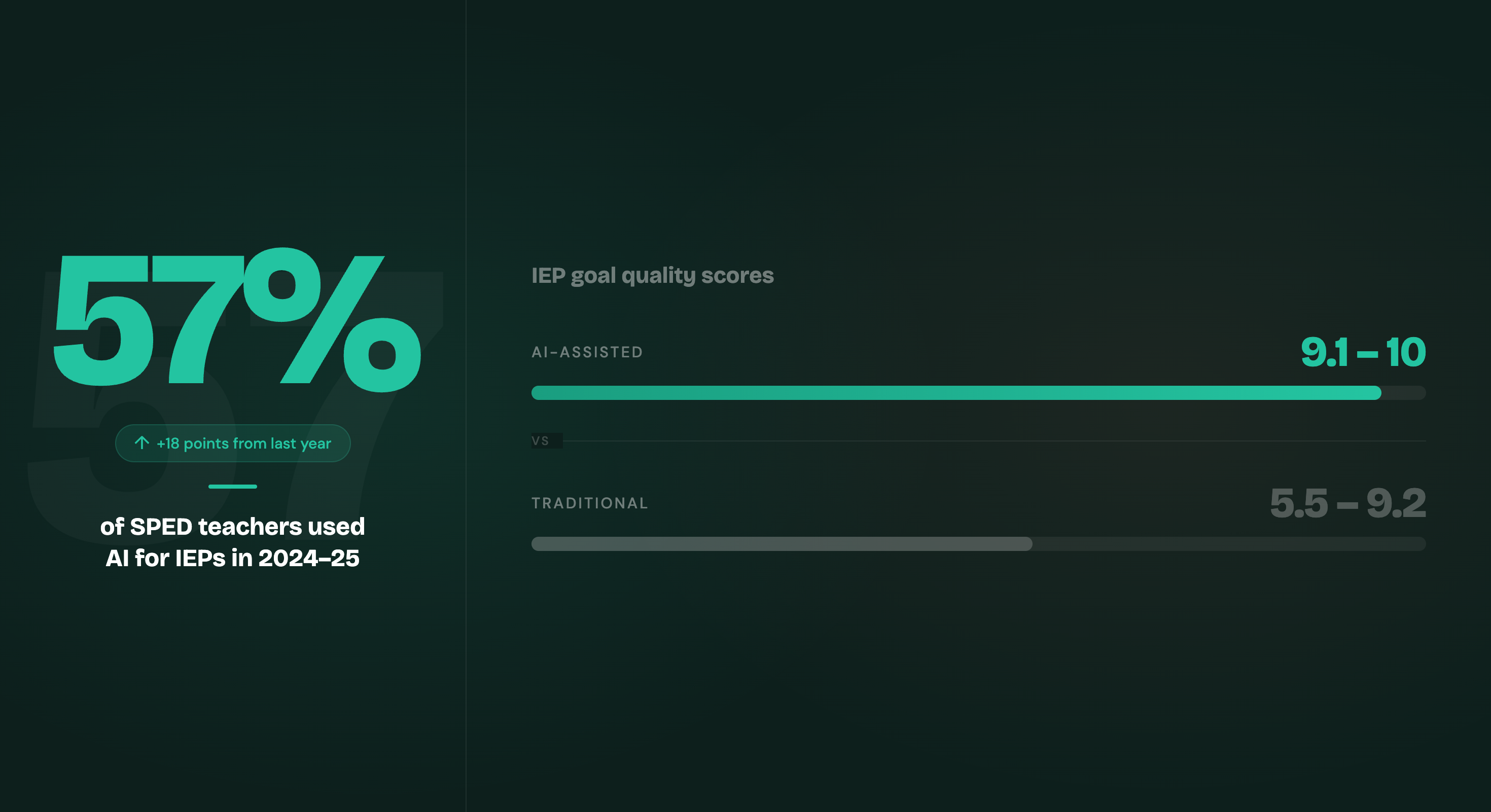

A preliminary study in the Journal of Autism and Developmental Disorders compared IEP goals written with and without ChatGPT for preschool students with autism. Goals drafted with AI assistance scored between 9.1 and 10 out of 10 on quality ratings. The control group, using traditional methods, scored between 5.5 and 9.2.

By the 2024-25 school year, 57% of licensed special education teachers were using AI to help develop IEPs or 504 plans, up 18 percentage points from the prior year, according to a CDT white paper. About a third used AI to identify patterns in student progress and guide goal-setting. Just 15% used it to write full plans without significant review.

There are real concerns here, and they're worth taking seriously. Perry A. Zirkel, a professor emeritus specializing in special education law, told Disability Scoop that AI tools "may lead to denial of meeting individual needs based on constraints of technology." Georgia is one of the few states to address this in formal guidance, specifically noting that AI shouldn't be used for high-stakes purposes like IEPs without meaningful human oversight.

The teachers getting the most from AI are the ones using it to get past the blank page. The judgment is still theirs.

At Lopez Island School District in Washington, special education teacher Rose Prust used Ablespace alongside AI tools to refine IEP goals for students with complex needs in early 2026. One finding stood out: Ablespace's interaction data revealed that a student with cortical visual impairment responded better to auditory cues than visual ones. You're not getting that kind of insight from a goal bank.

Dublin City Schools in Ohio had over 90% of their educators actively using an AI platform for IEP support work by spring 2025. The platform's IEP generator brought writing time down from two to three hours per student to about 30 to 45 minutes. One teacher described the moment her colleague quit mid-year, leaving her with dozens of IEPs due in two weeks. The AI generator, she said, was the difference between staying and walking out.

In Colorado, schools have adopted AI tools including Playground IEP for plan generation. Teachers enter a student's present levels of performance and get back multiple goal suggestions per area, plus accommodation recommendations grouped by instruction type, materials, environment, and assessment. It's the kind of structured starting point that goal banks were supposed to provide, but actually tailored to the individual student.

The pattern across every case is the same: AI produces a starting draft, and a teacher reviews, personalizes, and catches what the algorithm missed. The final document is still theirs. What's gone is the two hours of staring at a blank screen.

If IEP writing is taking your teachers' evenings, Monsha's IEP generator works on the same principle: structured first draft, teacher finalizes. For a comparison of IEP AI tools currently in use, this guide covers the top free options.

That same pattern shows up in lesson planning: AI produces the first draft, teacher decides what stays.

Teachers spend an average of five hours a week on lesson planning, according to Panorama Education. Fifty-eight percent say they'd benefit from more time. And those five hours are mostly coming from evenings.

The Education Endowment Foundation ran a randomised trial with 259 science teachers across 68 secondary schools in England. Half used ChatGPT alongside a structured guide on effective prompting and output review. Half didn't.

Teachers using ChatGPT spent 56.2 minutes per week on lesson and resource preparation. The control group spent 81.5 minutes. That's 25 minutes back per teacher per week, a 31% reduction in planning time.
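If you want to run the same arithmetic on your own school's numbers, here is a minimal Python sketch. The function name `planning_time_saved` is ours, made up for illustration; only the two weekly averages come from the trial figures quoted above.

```python
def planning_time_saved(control_minutes: float, ai_minutes: float) -> tuple[float, float]:
    """Return (minutes saved per week, percent reduction) for two weekly averages."""
    saved = control_minutes - ai_minutes
    percent = saved / control_minutes * 100
    return saved, percent


# Weekly planning minutes quoted above: 81.5 without ChatGPT, 56.2 with it.
saved, percent = planning_time_saved(81.5, 56.2)
print(f"{saved:.1f} minutes saved per week ({percent:.0f}% reduction)")
```

Swap in your own before-and-after averages to see whether a pilot is saving a comparable share of planning time.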

An expert panel reviewed the lesson resources produced by both groups, without knowing which had been made using AI. Quality was not meaningfully different.

Saarrah Moosa, a Senior Leader and STEM lead at Frederick Bremer School in east London, was among the participants. One thing the trial's design made clear: access to ChatGPT alone wasn't enough. Teachers who saw real time savings were using it with a clear structure for prompting and reviewing what came back. Without that structure, you just get faster output that isn't necessarily better.

The largest coordinated AI training effort underway in the US is the AFT's National Academy for AI Instruction, a $23 million initiative launched in fall 2025 with Microsoft, OpenAI, Anthropic, and the United Federation of Teachers. The goal is 400,000 educators trained over five years, with the flagship campus co-hosted by the UFT in Manhattan.

AFT President Randi Weingarten's framing is worth paying attention to: "If we learn how to harness it, set commonsense guardrails and put teachers in the driver's seat, teaching and learning can be enhanced." That's the largest teachers union in the US investing in making sure its members are the ones leading AI adoption in classrooms.

In the UK, the Chiltern Learning Trust is leading a government-backed national programme, developing "Safe and Effective AI in Education" training modules with the Department for Education and the Chartered College of Teaching. Their target is 60% of UK schools with at least one certified staff member by Q4 2026. In Australia, 78% of secondary schools were actively using AI tools by mid-2025, with lesson planning the most common starting point.

One honest note from the research: a Microsoft study in Indiana found that AI use reduced time-to-complete-work by 40%, but students reported the work felt less like their own. So yes, the time savings are real, but how schools use that recovered time matters just as much.

For individual teachers building this into their weekly routine, Monsha's lesson plan generator works on the same principle the EEF trial demonstrated: a structured first draft you review and revise, rather than a finished document to hand to students unchanged.

Resource creation is where AI adoption gets messy. And honest.

Most of the examples in this article involve coordinated decisions: a school board approving a policy, a union partnering with a tech company, a district rolling out a platform with training attached. Resource creation doesn't work like that. As noted earlier, 43% of teachers buy AI tools with their own money, without any district IT review or rollout plan. The typical case is a teacher on a Sunday night who needs a differentiated reading passage for three ability levels by Monday morning.

That's what Robert W., a high school teacher, described in a conversation with Monsha's support team. His workflow for a single book unit runs five separate tools in sequence: Gemini for the bulk of the text generation, DeepSeek as a second pass, NotebookLM to fact-check the homework and quizzes, Monsha for the presentations, Gamma to format the vocabulary lists and quiz sheets so they look presentable. "We don't have time to sit on Canva or whatever and design them all on our own," he said. He's right. But he's also spending real time switching between tools that could be doing the same job.

Some districts are moving toward a more structured version of what teachers like Robert are already doing on their own. Twin Rivers Unified School District in North Sacramento, California, deployed an AI platform across its roughly 25,000-student district after a pilot showed concrete time savings on differentiated materials. One teacher produced a full week of differentiated lessons in under an hour, a task that had previously taken several evenings. At Dublin City Schools in Ohio, teachers used an AI text-leveling tool to photograph book pages on their phones and produce side-by-side versions at different reading levels for struggling students. More than 90% of Dublin's educators, including paraprofessionals and administrators, were actively using the platform by spring 2025.

The Yazoo County School District in Mississippi landed on a different tool for a different job. A teacher there didn't mince words: "I'm in love with your presentation resource. I haven't used any other AI platform that has this exact feature." She wasn't writing a testimonial. She'd found the thing that did the specific job she needed, and she kept coming back.

And then there's the honest counterpoint: these tools fail often enough to matter. The same conversations that surface that kind of enthusiasm also surface this, from a teacher at Dallas ISD: "This AI is so far off what I requested that I won't bother. I return to ChatGPT. Bye." The tools that earn continued use are the ones producing output a teacher can hand to students without spending another hour reformatting it.

If your teachers are losing time reformatting AI-generated materials, Monsha's worksheet generator and presentation maker are built to produce classroom-ready output from the start, not raw text that needs a second tool to make it usable.

Resource creation is mostly a teacher decision. A school can discourage it, permit it, or encourage it, but the tool is on the teacher's laptop and the call is largely theirs.

Student-facing AI is different. When a district puts AI in front of students, someone has to decide what that AI is allowed to do. And that decision, more than the tool itself, is what determines whether the rollout actually works.

Newark Public Schools has one of the clearest examples of what a considered rollout looks like. According to Khan Academy's case study, the district began piloting Khanmigo at First Avenue School in 2023, then expanded to 66 schools serving approximately 29,000 students. The design choice that mattered most wasn't the tool. It was the guardrails around it. Khanmigo can't give students direct answers. It uses a Socratic approach: guided questions, hints, dialogue rather than solutions. It's switched off entirely during quizzes and assessments. In a three-year longitudinal study of roughly 8,000 students in grades 3-8, students who became Yearly Proficient Learners on Khan Academy achieved an average of +6 points on New Jersey's state math assessment, compared to the state average of +2.

New South Wales, Australia, took the same philosophical position at a much larger scale. In Term 4 2025, the NSW Department of Education rolled out NSWEduChat to all public school students from Year 5 through Year 12. Rather than providing answers, the tool encourages critical thinking through guided questions. Student data is secured and content is filtered. In the trials before launch, students reported it helped them "understand their work better, develop their writing skills and break down complex tasks."

New York City reversed its ChatGPT ban and launched a full AI initiative, a signal that even districts that drew the hardest lines have concluded that keeping students away from AI is harder to defend than teaching them to use it thoughtfully.

Newark and NSW arrived at similar design decisions despite using different tools in very different contexts. Both were trying to avoid the same thing: AI that makes test scores look better in the short term without actually improving learning. The OECD's 2026 research shows exactly what that looks like when it goes wrong. We cover those findings in the "what didn't work" section below.

Both prioritized guided thinking over answer delivery, and Newark went a step further by switching the tool off entirely during quizzes and assessments. The guardrail is really a position on what learning is supposed to look like, not just a restriction on a tool.

"Is it responsible to not teach it? We have to. We are preparing kids for their future," a Texas superintendent told EdWeek. The districts making deliberate guardrail decisions are the ones acting on that premise rather than deploying technology and hoping for the best.

If you're evaluating which tools fit which use case, the breakdown of the best AI tools for teachers covers what's currently being used at the school level.

The guardrail question doesn't only apply to student-facing AI. Teachers face a version of it too, every time they use AI to generate first-pass feedback on a piece of student writing.

The question is the same one Newark and NSW had to answer: what is the AI doing, and what are you doing?

In June 2025, the UK Department for Education formally cleared teachers to use AI for routine marking, with one condition: the teacher reviews the output and takes responsibility for what goes to students. And that condition is really the design principle that makes the whole thing function.

At Mustang High School in Oklahoma, 33 teachers ran a school-wide AI pilot. English teacher Colin Franklin had already been using AI tools for over a year before the pilot started. Teachers reported marking faster and providing more detailed feedback, not because the AI was doing the job for them, but because it handled the first pass on drafts. That freed them to spend their time on the work that actually required them.

That distinction is worth holding onto. First-pass feedback on a student's first draft is not the same thing as final judgment on assessed work. AI can process volume and flag common errors, but it can't notice that a student's writing sounds flat compared to last month, or that this particular piece came in three days late after a difficult week. That's still the teacher's job.

UK school leaders describe this as the "critical friend" model. One Deputy Head described the cultural shift in their school: it came from agreeing that "AI was a useful starting point or critical friend, not an endpoint." Once that framing was in place, staff felt less uneasy. The output was still the teacher's. The time cost was lower.

The objection worth sitting with comes from a high school English teacher writing in Edutopia: even "feedback-only" guardrails still leave students one click away from a full generative AI rewrite. The AI can give feedback, but what students actually do with it is a teaching question, not a technology one.

Seventy percent of teachers say they worry AI weakens students' critical thinking and research skills. Most of them are still using it. The answer they've arrived at looks a lot like what the best student-facing rollouts figured out: keep the human in the loop, make the AI's role explicit, and don't hand it the last word.

Every example so far has started with a teacher making a choice: open a tool, describe what they need, review the output. This one is different.

In four New Mexico school districts, Farmington, Raton, Carlsbad, and Hobbs, an AI platform called Edia now texts parents automatically the moment an absence is marked. The system asks for a reason. It accepts documentation. It routes absence patterns to staff who can act on trends rather than chasing individual phone calls. Parent response rates exceeded 60%. Traditional robocalls, which this replaced, got a fraction of that. Pilot schools saw roughly double the attendance improvement of non-pilot schools during the same period.

"Kids can't learn if they're not present," said Nathan Pierantoni, Farmington's executive director. "And we're talking about a district with 10,737 students." Superintendent Cody Diehl put the design principle plainly: "This is a tool, not a solution. If we see trends and patterns, we'll continue to do what we're supposed to support our kids."

And that distinction matters. The AI's job here is pattern detection at a scale no staff team can replicate manually: attendance data, behavior records, assignment completion, survey responses, all running together to flag a student who's slipping before the slip becomes visible in a report card or a counselor's caseload. At Laguna Beach Unified School District in California, Panorama's Solara platform surfaces early-warning indicators like attendance dips and missed assignments, so staff can respond faster than a traditional check-in cycle allows.
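To make "early-warning indicator" concrete, here is a deliberately simple Python sketch of the idea. It is not how Panorama, Edia, or any vendor actually works: the `StudentSnapshot` fields, the thresholds, and the sample students are all hypothetical, chosen only to show how an attendance dip and missed assignments could be combined into a flag for staff to review.

```python
from dataclasses import dataclass


@dataclass
class StudentSnapshot:
    name: str
    baseline_attendance: float  # share of days attended earlier in the term (0.0 to 1.0)
    recent_attendance: float    # share of days attended in the most recent weeks
    missed_assignments: int     # assignments missed in the same recent window


# Hypothetical thresholds; a real district would set these with its own staff.
ATTENDANCE_DIP = 0.10  # flag a drop of 10 percentage points or more
MISSED_LIMIT = 3       # flag three or more missed assignments


def early_warning_flags(student: StudentSnapshot) -> list[str]:
    """Return the reasons, if any, this student should get a staff check-in."""
    flags = []
    if student.baseline_attendance - student.recent_attendance >= ATTENDANCE_DIP:
        flags.append("attendance dip")
    if student.missed_assignments >= MISSED_LIMIT:
        flags.append("missed assignments")
    return flags


# Made-up sample data for illustration only.
students = [
    StudentSnapshot("Student A", 0.96, 0.95, 1),
    StudentSnapshot("Student B", 0.94, 0.80, 4),
]

for student in students:
    flags = early_warning_flags(student)
    if flags:
        print(f"{student.name}: review needed ({', '.join(flags)})")
```

Note what the sketch does not do: it never decides what happens next. It only surfaces a name and a reason, and a person takes it from there.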

The research on these systems is still catching up with the rollout. A 2025 IES analysis found that machine-learning early warning models accurately predicted near-term risks, but weren't always more efficient than traditional methods. The real value turned out to be in the speed of the handoff from data to action, not in the prediction itself.

If your district hasn't looked at how attendance and academic data are being used together, this is a practical place to start.

Not every implementation story in this article ended cleanly. Some didn't end well at all.

In December, fourth graders at Delevan Drive Elementary School in Los Angeles were assigned to create a book cover for Pippi Longstocking using Adobe Express for Education, a tool installed on school-issued Chromebooks. When a student asked for "long stockings a red headed girl with braids sticking straight out," the tool returned sexualized imagery. Adobe fixed the issue within 24 hours. California pushed new safeguards. But the homework assignment had already gone home. (CalMatters covered the full story.)

That incident points to a specific gap: the difference between a tool being approved for classroom use and a tool being ready for the exact task a teacher assigns. The vendor responded fast. The policy process was slower. The students were in the middle.

The more consequential finding comes from OECD research in Türkiye. Around 1,000 high school students used GPT-4 across six sessions. A standard chatbot improved short-term performance by 48%. A tutoring-style version improved it by 127%. Then access was removed, and students who had used the general-purpose chatbot scored 17% worse than peers who had studied without AI at all. The short-term gains had masked a real problem: students were getting answers, not learning.

AI detection tools introduced a different kind of failure. Schools across the US began spending thousands on tools designed to catch AI-written student work. Vanderbilt University ultimately disabled Turnitin's AI detection entirely after concerns about false positives. A Stanford study found those same tools falsely flagged 61.2% of essays written by non-native English speakers, while performing near-perfectly on essays from US-born students. At Eleanor Roosevelt High School in Maryland, a student was flagged for writing about her own music taste. (NPR reported on the broader pattern.)

Then there's the quieter failure Edutopia documented: teachers using AI to prepare lessons while telling students not to. One student called it "hypocritical." And that's not a technology failure. It's a policy failure. Nobody in the building had decided what the rules were, or for whom.

Frederick Hess at AEI captured the pattern that sets schools up for all of these: "I've sat through dozens of pitch sessions and interviews with vendors promising their new tools would transform teacher work. (Narrator: They didn't.) This often left teachers cynical, frustrated that new portals, smartboards, and apps had ultimately been more hassle than help."

AI doesn't bypass that history automatically. What separates the examples that worked from the ones above is almost always the same thing: someone decided, in advance, what the tool was for and what it wasn't.

There is one question that keeps coming up in school conversations about AI. It's rarely teachers who bring it up.

No, AI is not replacing teachers, and teachers themselves don't spend much time on the question. That should tell you something.

Across the research and teacher forums this article draws from, "Will AI replace me?" comes up and then gets dismissed in the same breath. The dominant feeling isn't existential dread. It's closer to impatience with the question. Teachers are busy. They don't have time for hypotheticals when there's a stack of IEPs due on Friday.

A Texas superintendent put the more useful question plainly in EdWeek: "Is it responsible to not teach it? We have to. We are preparing kids for their future."

That reframe matters. The real question is what happens to students if schools don't prepare them for a world where AI is everywhere. Teachers are the ones who make that preparation meaningful. No tool does it alone.

The AFT's National Academy for AI Instruction is the clearest institutional signal of where this is heading. The largest teachers union in the US is investing in making sure its members are the ones who know how to use these tools, and use them responsibly.

The examples in this article are full of teachers making judgment calls: reviewing AI-generated IEP goals before signing off, deciding which sections of a lesson plan to rewrite, recognizing when a student needs a conversation rather than another practice problem. All of that is still teaching. The job looks the same. It just takes less time in some places.

Looking across these 20 examples of AI in education, the signal is consistent. AI works when the school has already answered two questions before the tool arrives: what specific job is this doing, and who is reviewing the output?

IEP drafts that teachers verify and sign. Lesson plans that teachers rewrite before delivering. Worksheets generated in seconds that still go through a teacher before reaching students. All of it is teachers reclaiming time on jobs that used to eat their evenings.

The schools in the "what didn't work" column share something: they added a tool without defining the job it was doing, or the guardrail that kept a teacher in the loop.

If you're making decisions about AI in your school right now, a clear policy is more useful than another tool. This guide on building an AI policy for schools walks through what that looks like in practice. And if your team is evaluating tools before committing, this free rubric walks through what to look for.

If your teachers are already using AI for planning, resource creation, or differentiation, Monsha is built specifically for those jobs. Several schools in this article are already using it for exactly that.

Monsha Co-Founder & CEO

Hi, I’m Piash - one of the people behind Monsha. I spend most of my time talking to teachers, learning how they work, and building tools to make that easier. Here, I write about practical ways AI can support your workflow, new features we’re building, and stories from real educators using Monsha.
