Explore how Google’s NotebookLM for teachers can transform teaching prep, student engagement, and collaborative education. Discover key features, benefits, use cases for educators, and a tutorial for getting started.

You may have heard NotebookLM is a tool that can save teachers a lot of prep time. A lot of us have tried it once. We made an audio overview of a textbook chapter and were amazed by the two AI hosts who sound like real podcast voices. Then we went back to writing lesson plans by hand because the rest of NotebookLM was not as easy to figure out.

The tool is great. It just tends to need a walk-through before you start saving time.

So here is the walk-through.

NotebookLM is a Google AI tool for reading and writing with your own materials. You upload your sources (PDFs, Google Docs, websites, YouTube videos, EPUBs, pasted text), and the chat answers questions only from those sources. Every answer carries an inline citation that links back to the passage it came from. Unlike ChatGPT or Gemini (here's how Gemini for Education compares), NotebookLM will not pull from the open internet, and it will not generate from training data when the sources run short.

That trade-off matters because of accuracy. A study from late 2025 tested NotebookLM, ChatGPT, and Gemini on a 300-document corpus and found NotebookLM made things up in about 13% of responses, against about 40% for the other two. So you still want to spot-check the output, but the gap is meaningful when something is heading toward students.

If the version of NotebookLM in your head is from early 2025, here's what's changed since then:

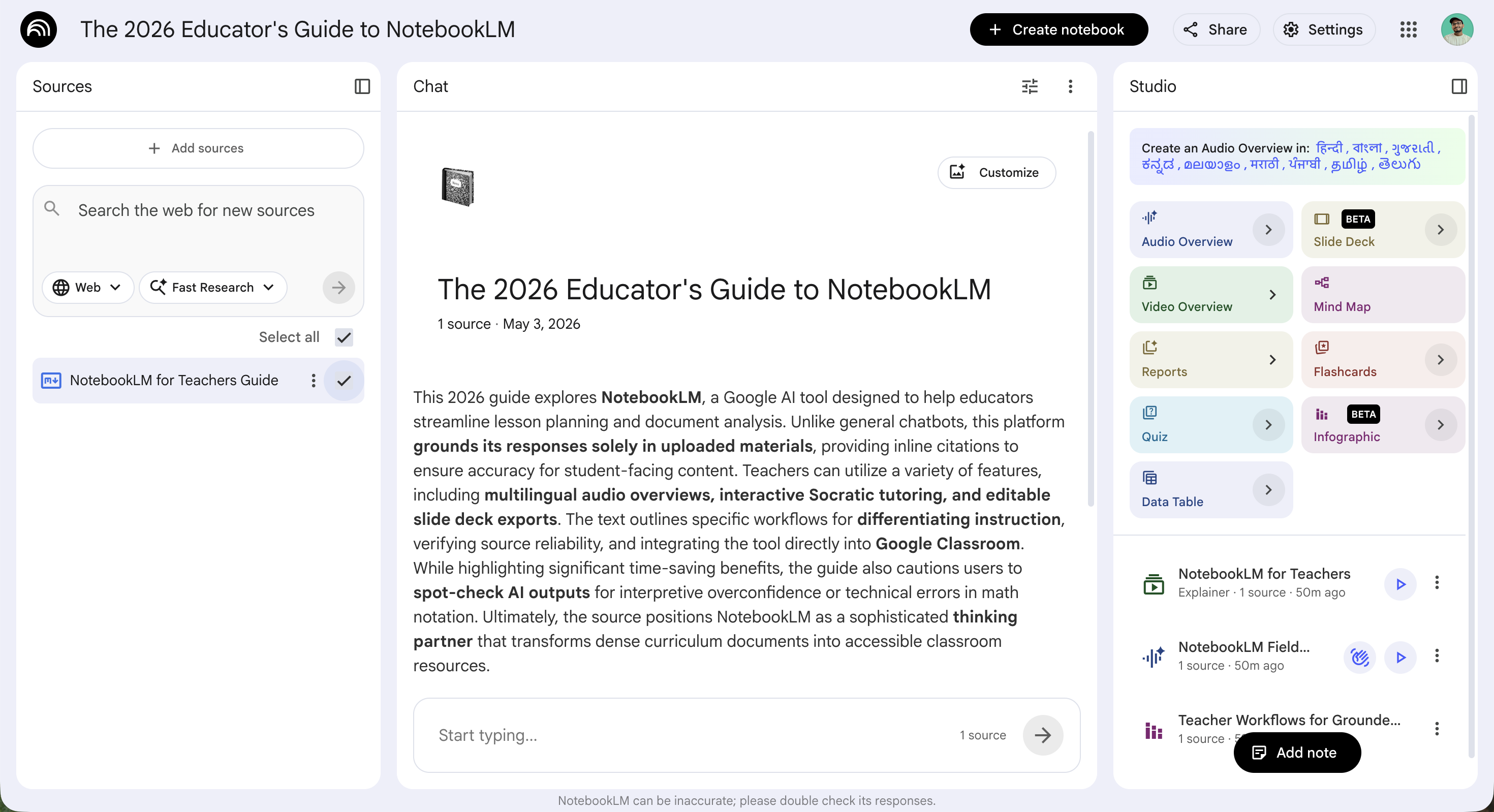

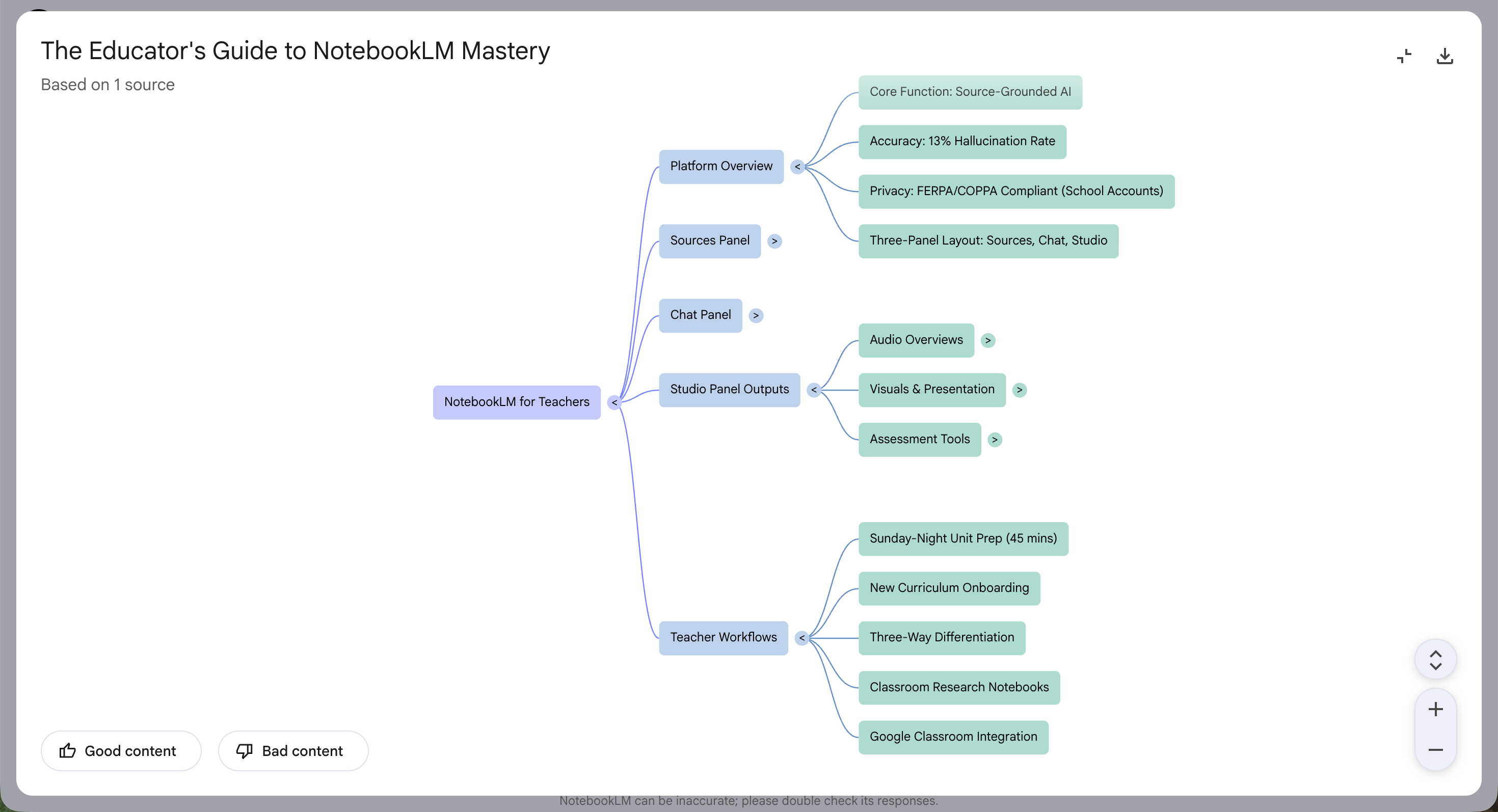

The working layout has three panels. Sources on the left holds your uploads. Chat in the middle is where you ask questions, with citations that click back to the source. Studio on the right is where everything else gets generated: the podcast with two AI hosts you can interrupt mid-conversation, the video overview, the mind map, the slide deck that exports to PowerPoint, the quiz with persistent progress.

So if you tried NotebookLM once last year and decided it wasn't for you, fair, but the screenshots in your head are out of date. Hopefully this guide gives you a reason to take another look.

If you'd rather listen than read through this long article, hit play on the video below. The entire podcast was created with NotebookLM from this Monsha article!

If you have a school Google account, you can open NotebookLM right now. Go to notebooklm.google, sign in, and click Create new. The technical work ends there.

The question that matters more is which account you sign in with, because that's what determines the data protections that apply to whatever you upload.

If your school is on Google Workspace for Education Fundamentals, Standard, or Plus, NotebookLM is included at no extra cost and is on by default. Google confirmed in August 2025 that NotebookLM is now a Core Workspace Service for all three editions, covered under the Education terms, which means FERPA, COPPA, and no model training on your data.

A lot of teacher blogs written before that announcement still tell you students under 18 can't use NotebookLM directly. That guidance is now out of date.

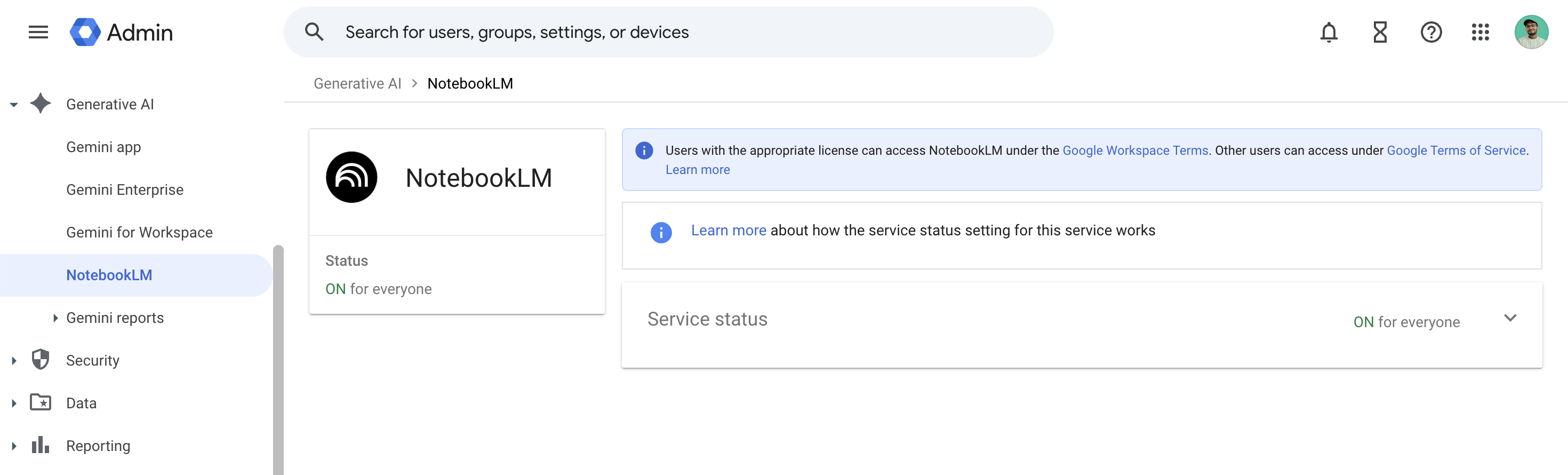

If you sign in and see a "service is off" message, your admin has switched NotebookLM off for your unit, which is the most common first-day issue I hear about from teachers. The fix is one ticket: ask your IT team to enable NotebookLM under Admin console → Generative AI → NotebookLM. As John Sowash at ChrmBook puts it, "Talk with your IT department if you do NOT see this feature."

A personal Gmail account also works for lesson planning with publicly available materials, but the data protections are weaker. So I would not put actual student work into a personal account. The decision really comes down to what you plan to upload.

The free tier holds 50 sources per notebook, 50 chat queries a day, and three audio generations a day, which in practice runs most teachers through a full unit a week without hitting any of them. The paid tiers (Google AI Plus, Pro, and Ultra) lift the caps for teachers using NotebookLM heavily across multiple courses, but that's a problem worth solving once you're using the tool, not before.
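If you want to check a planned prep day against those caps before you start, here is a minimal sketch. The cap numbers are the ones quoted above. The function name and the two example days are hypothetical, and nothing here talks to NotebookLM itself.

```python
# Free-tier caps as quoted in this guide (not read from any Google API).
FREE_TIER_CAPS = {
    "sources_per_notebook": 50,
    "chat_queries_per_day": 50,
    "audio_generations_per_day": 3,
}


def over_cap(planned: dict, caps: dict = FREE_TIER_CAPS) -> dict:
    """Return {limit_name: amount_over} for every planned figure above its cap."""
    return {
        name: planned[name] - cap
        for name, cap in caps.items()
        if planned.get(name, 0) > cap
    }


# Hypothetical prep days for a single unit notebook.
unit_day = {
    "sources_per_notebook": 10,
    "chat_queries_per_day": 25,
    "audio_generations_per_day": 2,
}
heavy_day = {
    "sources_per_notebook": 10,
    "chat_queries_per_day": 25,
    "audio_generations_per_day": 5,
}

print(over_cap(unit_day))   # empty dict: nothing over the free tier
print(over_cap(heavy_day))  # audio generations are 2 over the daily cap
```

An empty result means the day fits on the free tier. Anything else tells you which cap you would hit first.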

Once you're signed in and the service is on, here's what a working setup looks like:

That's a working notebook. From here, the thing that matters most is what you put into the Sources panel, which is what the next section walks through.

Prefer video instead? Here's a relevant tutorial showing how teachers can upload PDFs, Google Docs, text, or URLs into NotebookLM and turn them into clear lesson plans, comprehension questions, vocabulary lists, simplified explanations and more. NotebookLM also generates podcasts from your readings so you can study while commuting or doing your errands.

Every Studio output in NotebookLM is only as good as what's in the Sources panel. Most of the friction teachers run into with NotebookLM, when you trace it back, starts here. And most of that friction is preventable, if you know what to check before you generate anything.

Per Google's documentation, NotebookLM accepts PDFs, Google Docs, Google Slides, Word documents, plain text, Markdown, CSV, PowerPoint, websites, YouTube links, pasted text, EPUB (added in March 2026), and audio files. Word documents work directly now, so you can ignore the older guidance from teacher blogs that still tells you to convert them to PDF first.

What fails, mostly silently:

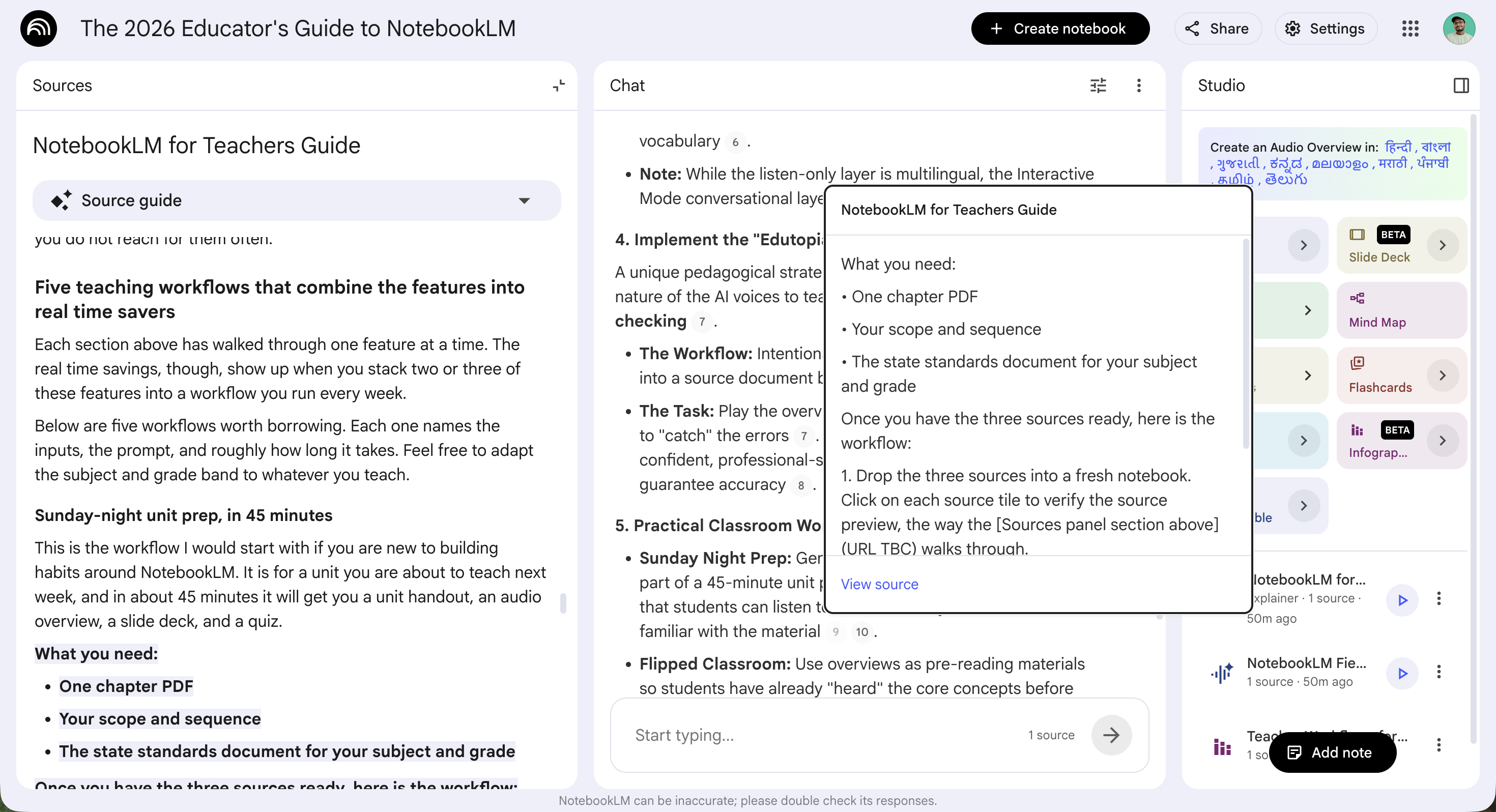

The verification move is simple, and it has two layers.

The first is the source preview. Click the source tile, and the right-hand pane shows a preview of the extracted content. If the preview is empty, or shows only the URL and a metadata stub, the upload failed. The tile is a placeholder for content the chat can't actually see. Re-add the file, convert it to a different format, or replace the source.

The second layer is for cases where the preview looks fine but you want a stronger signal. Ask NotebookLM directly what it sees. The prompt is something like "Summarise the contents of [filename] in three sentences." If the answer is generic, vague, or hedged, the source did not load the way you needed it to. If it's specific and citation-grounded, you're fine.

Fair warning: this sounds like an extra step, and it is. But it costs about ten seconds, and it prevents the failure mode where you generate a 12-minute audio overview, send it to a class, and find out later it was built on three of the seven sources you uploaded, because the other four loaded as metadata only.

The free tier holds 50 sources per notebook, and most teachers don't get close. The working number is closer to 10, which is what Educators Technology settled on. The reason is simple: the more sources you stack into one notebook, the less data NotebookLM pulls from each one.

A teacher in the r/notebooklm limitations thread put it directly: "the more sources you put in it, the less data it will fetch from each." A separate r/edtech audit confirmed it from the other side, with a 220-page image PDF that loaded as a tile but had only pages 97 to 149 actually come through to the chat.

So the working version is one notebook per unit, eight to twelve sources, verified individually before you generate anything. Run that, and NotebookLM stops surprising you in front of a class.

Most of us start with a PDF, type "summarize this," and get a serviceable paragraph back. That's the most common first use of NotebookLM's chat, and it's why some teachers decide NotebookLM isn't worth the trouble. The chat is much more capable than that. It carries citation grounding, Learning Mode, custom personas, and a small library of prompt patterns that turn it from a search interface into a thinking partner. But the difference shows up only when you give it a specific source, a grade band, and a constraint.

This section assumes you've followed the verify-before-generate move from the Sources panel. If your sources didn't load properly, no prompt is going to save you.

Every chat response carries inline numbered citations. Click one and the source preview opens to the exact passage the answer came from. Tech & Learning calls this "interrogating a text," and it's the single biggest under-used move in the chat panel. The temptation is to read the answer, accept the citation as a receipt, and keep moving.

Now there's a catch. The citation only confirms the source said something, not that NotebookLM interpreted what it said correctly. A paper from October 2025 calls this gap "interpretive overconfidence": NotebookLM's outputs compress rather than comprehend a text, and sometimes add a tone of certainty or a sense of importance the original author wouldn't recognise.

So the verify-by-hand move is:

You can skip the click on summary work and routine restatement. Run it on anything going in front of a class.

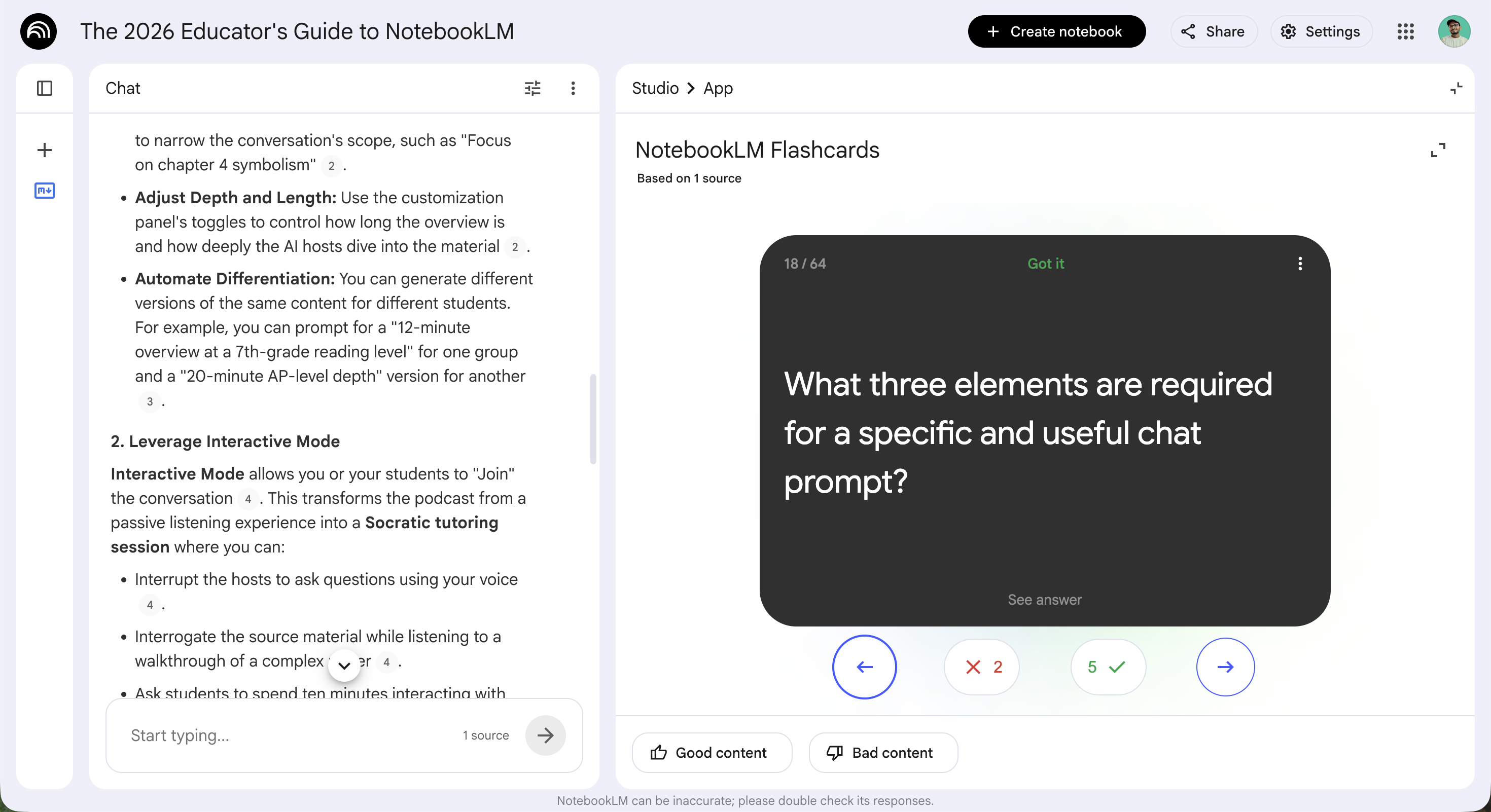

Learning Mode shipped in late 2025 and tends to get bypassed on first pass because the name sounds like marketing. The mechanic matters more than the name:

For most lesson prep, Default is the right setting. Use Learning Mode when you're sitting with a paper before assigning it, walking yourself through it the way you'd want students to. A teacher in r/notebooklm extended the move into a class workflow: assign students a notebook with two or three sources, ask them to spend ten minutes in Learning Mode, and the chat does the slow reading the lesson would otherwise have to.

Set the persona once at the notebook level. Every subsequent answer in that notebook comes back already framed for that context. No more rewriting "as a 7th grade Earth Science teacher aligned to NGSS, please rewrite this passage at a 4th grade reading level" at the top of every prompt.

Two examples to borrow:

Respond as a curriculum designer aligned to NGSS for grade 7 Earth Science. When asked to rewrite a passage, default to a 4th grade reading level unless told otherwise.

Respond as a coach for an 11th grade student preparing for AP US History. Push the student toward evaluate-tier analysis. Cite the page each claim is grounded in.

The chat will hold either frame for the entire notebook session, which means you stop wasting the first half of every prompt re-establishing context the chat already had.

NotebookLM is forgiving. "Summarize this paper" will reliably get you a paragraph that lands generally on topic. But teacher prep usually needs more than passable. It needs the kind of output you can drop into a lesson plan or hand to a student. Each prompt below names a source, a grade band, and a constraint, which is what the chat needs to land somewhere useful.

1. Differentiate.

Take the lesson on [topic] from [source X] and produce three versions for students at three reading levels: a 4th grade scaffolded version, a grade-level version, and an extension version for students who finished early.

Leah Cleary names a version of this as her weekly move.

2. Scaffold.

For [source X], generate a sequence of questions a student can use to work through this independently, starting with "what does this paragraph say" and building to "what does this paragraph imply."

3. Three Bloom's levels.

Generate three questions on [topic from source X] at each of these Bloom's levels: remember, apply, evaluate. Cite the page each question is grounded in.

4. Find gaps.

Across the sources I've uploaded, identify three claims I'm about to teach where the sources disagree or contradict each other.

This one is a check on your own preparation, and it's the prompt that surprises teachers most often.

5. Simplify for grade level.

Rewrite [paragraph from source X] at a 4th grade reading level. Preserve all content. Cut any vocabulary above the grade band or define it inline.

Anita Samuel uses a close version of this in her Substack.

The pattern across all five is the same: source, grade band, constraint. Skip any of those three and the chat gives you a generic paragraph. Include them and the output gets specific enough to actually use. Hopefully one of these prompts gives you a way to use the chat as a thinking partner this week.
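If you keep your prompts in a document or script, the source, grade band, constraint pattern can be sketched as a small template that refuses to build a prompt when one of the pieces is missing. This is only an illustration. `PromptSpec` and `build_prompt` are hypothetical names, the example values are made up, and the output is plain text you would paste into the chat yourself.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    task: str        # what you want produced
    source: str      # which uploaded source to draw from
    grade_band: str  # who the output is for
    constraint: str  # the rule the output must follow


def build_prompt(spec: PromptSpec) -> str:
    """Assemble a chat prompt, failing loudly if any piece is blank."""
    missing = [name for name, value in vars(spec).items() if not value.strip()]
    if missing:
        raise ValueError(f"Prompt is missing: {', '.join(missing)}")
    return (
        f"{spec.task} Use {spec.source}. "
        f"Pitch it at {spec.grade_band}. {spec.constraint}"
    )


# Hypothetical example values.
spec = PromptSpec(
    task="Generate three questions at each of these Bloom's levels: remember, apply, evaluate.",
    source="chapter 4 of the uploaded textbook",
    grade_band="a 9th grade reading level",
    constraint="Cite the page each question is grounded in.",
)
print(build_prompt(spec))
```

The check is the useful part. A prompt that skips the source, the grade band, or the constraint never gets as far as the chat.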

A history teacher in the OER Project Teacher's Lounge described her first audio overview this way:

"Totally amazing and totally terrifying. The laughs are even placed in the places someone would laugh."

That reaction shows up in nearly every first-encounter post about NotebookLM. The teachers who come back to it are the ones who learn what to do with the audio after the wow wears off, and the 2026 controls are what get you there.

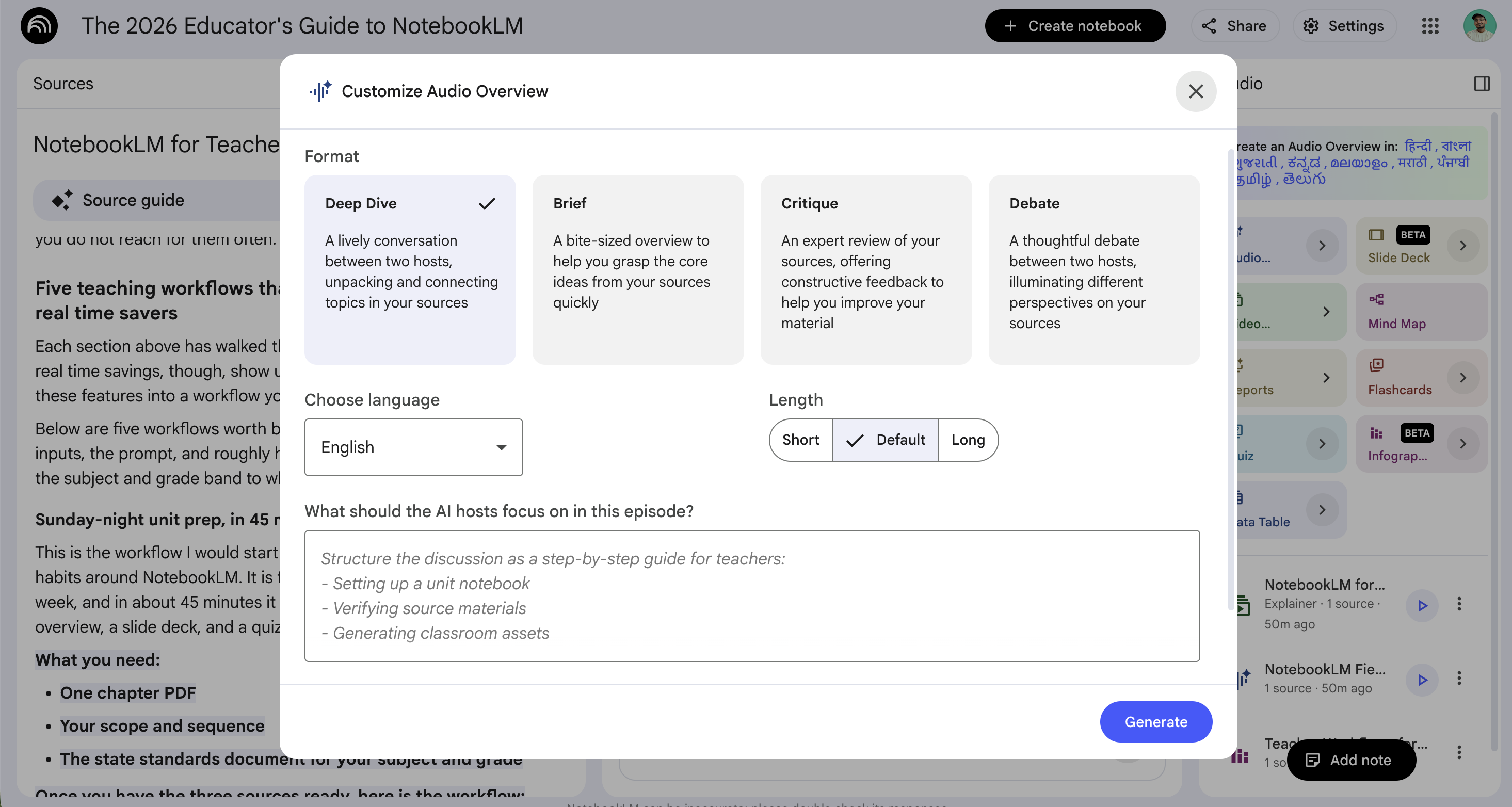

The default audio overview is fine for a one-off. It's also why the first generation often doesn't get used in class. The audio lives in the Studio panel; click Generate and NotebookLM produces an eight-to-fifteen-minute multi-host conversation drawn from your sources. At default it lands generically, the same way "summarise this" lands generically in the chat.

The fix is the focus prompt:

The customisation panel also exposes a length toggle and a depth control alongside the focus prompt. Both are worth pushing on if the first version still feels generic, before you regenerate from scratch.

Here's a pro tip from John Sowash at ChrmBook. Same source set, two slightly different prompts, two different versions:

Generate a 12-minute audio overview at a 7th grade reading level, avoiding terms above grade band unless defined inline.

Generate a 20-minute audio overview at AP-level depth, including evaluate-tier discussion of where sources 2 and 5 disagree.

That's differentiation, automated. Run both prompts against the same notebook and you have a middle-school version and an AP version of the same content, each pitched at the level its student needs.

Interactive Mode is what makes 2026 NotebookLM a different product from the version teachers tried in 2024. After the audio generates, a Join button appears alongside the play controls. Hit Join, the hosts pause, you ask a question with your voice, and the hosts answer from your sources before picking up where the original overview left off.

What Interactive Mode is good for in class:

Where it falls short:

As of March 2026, audio overviews ship in 80+ languages. For ELL teachers, this is the feature that justifies the whole tool. The output in each language is full-length, with the structure and depth of the English version.

Here's the classroom workflow:

The Interactive Mode caveat applies, though. The conversational layer is English-only. The listen-only layer is multilingual.

Edutopia's NotebookLM podcast video names a pedagogical move worth borrowing. The move works because the audio is persuasive. The hosts sound competent, the laughs land where someone would laugh, and a confident host with a podcast voice isn't the kind of source students naturally push back against. That's exactly the lever.

Here's the move:

The listening becomes close reading. The comprehension check is embedded in the task itself, and students get the lived experience of why "it sounded confident" isn't the same as "it was right." It's the same lesson the interpretive-overconfidence research from October 2025 makes for the chat panel, just delivered through audio.

Video overviews shipped in two flavours in 2026: standard and cinematic. They are not the same use case. The cinematic version is the one that gets the demos. The standard version is the one teachers will actually deploy.

Google announced Cinematic Video Overviews on 4 March 2026, built on Gemini 3, Nano Banana Pro, and Veo 3, with Gemini acting as a creative director that builds fluid animations and visual storytelling from your sources. It's impressive on first watch. It is also gated. Cinematic requires Google AI Ultra at $250 a month, and it is 18+ only, English-only, capped at 20 generations a day, and not editable after generation. If the structure misses, you regenerate from scratch and burn another generation against the cap.

Most teachers I know aren't on Ultra. So even if cinematic is the better demo, it's not the thing you build a Sunday-night habit around.

The standard version is the working one. It runs on the free tier with limits, and it ships with two formats worth knowing. The Explainer connects the dots across your sources in a structured walkthrough. The Brief is a two-to-three-minute version that lands one core idea. Underneath both, the standard output is a narrated slideshow, not animated cinema. That's the right object for the use cases Educators Technology names: flipped-classroom pre-reading where students arrive having already heard the material, parent meetings where you need a quick walk-through of the unit you're about to start, and student feedback delivered as a short narrated tour of an annotated rubric.

Here's how the two formats compare side by side:

A fair warning on engagement, though. A year-5 teacher in the r/notebooklm game-changer thread put it well: "I do like the video feature too however I find it's less engaging for students and can become a little robotic, but the potential is definitely there!" The standard videos sound competent, the same way the audio overviews do, and the same caveat applies. Persuasive narration is not the same as accurate narration. If you're going to play one in front of students, watch it once first, and use the interpretive-overconfidence research as a check on yourself the way you would for the audio.

So skip cinematic unless you have Ultra and a one-off asset to produce. Use standard, in Brief format, for anything you need to deploy this week.

Here's a video on NotebookLM for Teachers, to show what the standard video overview format looks like in practice. This one was generated by NotebookLM from the sources behind this guide.

Google's March 2025 launch post for mind maps led with a biology example: drop research papers about Coral Reef Ecosystem Decline into NotebookLM and you get back a branching diagram with nodes for Ocean Acidification, Rising Sea Temperatures, Pollution, and Overfishing. The teaching examples weren't in the launch post.

What the launch post doesn't say is that every node is wired into the chat. Click a sub-topic and NotebookLM fires that topic into the chat panel as a question, with the same source citations the chat would return if you typed it yourself. The mind map isn't really a deliverable. It's a navigation interface to your sources.

Three uses earn the click in a teacher's week:

A few honest limits to know. The map structure is one interpretation, not a definitive index. A primary source's main argument can sit inside a sub-branch the model treated as secondary, especially in dense humanities sources where the thesis is paragraph nine, not paragraph one. Cross-check by clicking the node and reading what the chat returns from the sources. The map is also not editable after generation, and the only download is a static PNG. If a branch is wrong, you regenerate from scratch, and the new map will look slightly different. There's also no cross-notebook view, which is the same cross-notebook isolation XDA names elsewhere in the product.

The mind map is the read-only Studio output, which is great for navigation. The next section covers the editable Studio output, which is the one that changes Sunday-night prep.

NotebookLM can now generate a slide deck from your sources, let you edit individual slides with plain-language feedback, and export the whole thing as a PowerPoint (.pptx) file. The flow lives in the Studio panel on the right. As of the March 2026 update, all of it is on the free tier.

Open your notebook, look for the Slide deck card in the Studio panel, and click Generate. The default deck is fine for a quick demo, but it's not the deck you want to use in class. The prompt is what makes the difference.

Here's a prompt I like for any unit deck:

Generate a 12-slide deck for an AP Biology unit on cellular respiration. Include one slide per stage (glycolysis, link reaction, Krebs cycle, electron transport chain), an opening hook slide, a misconceptions slide, and three slides of practice questions at evaluate-tier Bloom's. Cite the source page each claim is grounded in.

Feel free to adapt the grade level, subject, and structure to whatever you're teaching. The deck takes about 60 to 90 seconds to generate.

Now this is the feature that changed in March 2026. Click any slide and submit feedback in plain language. Here are some examples:

The slide regenerates in place, and the rest of the deck stays where it is. So if four out of twelve slides need work, you can fix those four without throwing out the eight that are already good.

Click the three-dots menu on the slide deck card and select Download PowerPoint (.pptx). The file downloads right away.

A few places to take the file next:

One thing to watch for: the exported PPTX uses AI-generated layouts, so it may not be a perfect copy of what you saw on screen. Some elements export as images instead of editable text boxes. It's worth opening the imported deck once and looking it over before you teach with it.

If you teach math, science, or any subject with notation, there's a real limit to be aware of. A teacher in the r/notebooklm Stats thread shared a great example: "There's some issues. For example, the arrows on the first slide are wrong." Embedded math in PDFs gets mangled when NotebookLM ingests the upload, and the slide deck inherits the problem.

Here's the workaround. Instead of uploading the math PDF, paste the LaTeX directly into the chat as a source. The chat reads LaTeX cleanly, the slide deck pulls from the chat's source set, and the notation comes through correctly.

It's an extra step, but for a deck that depends on equations rendering properly, this is the way to get a clean export. Run the LaTeX-as-source workaround once and the math comes through without the back-and-forth of regenerating the deck three times.

If your students study from generated questions or flashcards, the Studio panel has both. As of the 2026 Studio update, the flashcards remember your Got it / Missed it sort between sessions, which is what turns them from a one-off demo into a real study tool. The one catch is the export gap. There is no native way to get a quiz or a flashcard deck out of NotebookLM and into a Google Form, a printable handout, or another tool, so a workaround is part of the workflow. Here's how to make the most of both.

Click Generate on the Quiz card and NotebookLM pulls a multi-question set from your sources, with hints, distractors, and answer explanations. The default quiz is fine for self-study, and it's a great starting point. For something you would actually put in front of a class, the prompt does the work:

Generate a 12-question quiz on cellular respiration at 9th-grade level. Mix six recall, four interpret, and two evaluate-tier questions. Each question should cite the source passage. Avoid distractors that turn on a single word.

Feel free to swap the topic, the grade level, and the Bloom's mix to whatever you are teaching.

A few things worth deleting from any generated set:

The Flashcards card generates a deck from your sources, and as of the 2026 update the deck remembers your Got it / Missed it sort the next time you open the notebook. So a student who works through 40 cards on Monday and comes back on Wednesday picks up at the cards they missed, not the ones they already knew. Feel free to assign a notebook with a target deck, set a number of cards per week, and let the persistent sort do the spaced-repetition work in the background.

Here's the catch with both of these. There's no native export. A middle-school teacher said it well in an r/notebooklm post: "I can't export the quizzes that it makes, nor is there an easy way to export the flashcards."

So here's what teachers do instead:

One thing to watch for: the chat-to-text move sometimes drops a citation or rewords a question slightly. It's worth comparing the plain-text version against what's on screen before you publish.

A teacher in the r/Teachers AI trust thread said it well: "AI is great at content transformation, but weaker at pedagogically sound assessment." That has been my experience too. The AI-generated questions tend to work well for recall and identification, and they tend to be weaker on evaluate-tier and synthesis questions, where the rubric depends on what the standard is actually asking for.

In my opinion, the working rule is to use AI-generated questions for low-stakes formative work, like exit tickets, study cards, or do-now starters. For anything graded, I would write the questions from scratch, or treat the AI version as a first draft and edit it carefully. The teachers I have talked to who get the most out of NotebookLM treat the Quiz card as a question generator they curate, rather than a finished quiz they ship.

So generate the quizzes and flashcards, use them as study tools inside the notebook, and screenshot what you need to keep. The export gap is the price of working inside NotebookLM right now, and the workarounds above are the working ones. I would love to hear what you do for export if you have found a workaround that beats the screenshot.

Three more Studio outputs worth knowing: Reports, Infographic, and Data Tables. Each has a narrow use case, and one is paid-only, so it helps to know before you click.

The Reports card is the most flexible Studio output. Default formats include Briefing Doc, Study Guide, FAQ, and Timeline. As of the March 2026 update, there's a Blog Post format too. There's also a custom option where you describe the structure, style, and tone you want, and NotebookLM tries to follow it.

The 2026 redesign also added dynamic suggestions. NotebookLM reads your sources and proposes formats that fit the content, so you might see a Glossary of Key Terms suggestion alongside the standard four. The Briefing Doc tends to be the one teachers reach for first. It's a tight summary of the upload that doubles as a sub-plan, a parent-night handout, or the unit-overview doc you keep meaning to write.

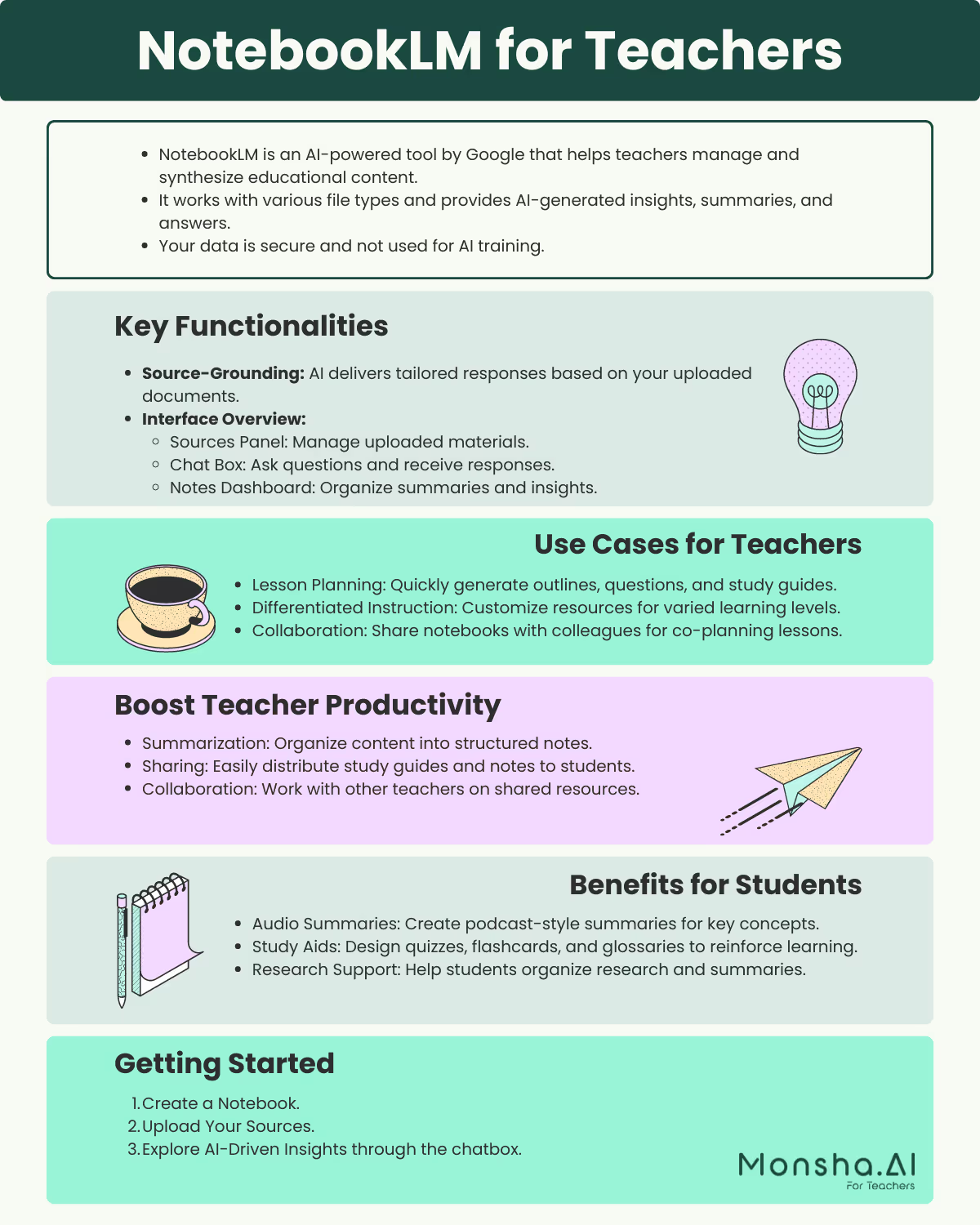

The Infographic card generates a single-image visual summary of your sources, downloadable as a PNG. Per Google's documentation, infographics are 18+ only, which matters in K-12: you can generate them for class use, but students cannot generate their own.

Pick from ten styles, or let NotebookLM auto-select:

In my opinion, Bento Grid is the one to try first for an end-of-unit review board. It scans cleanly when you project it.

Below is an infographic on Mastering NotebookLM in 2026:


The Data Tables card is the newest output. It's also the one most teachers will skip. It pulls structured tables out of your sources, the way a spreadsheet would. The Export to Sheets option in the three-dots menu drops each generated table into a new Google Sheet as its own tab. Per the December 2025 launch, this one is gated to Google AI Pro and Ultra, so teachers on the free tier won't see the option.

If you do have access, the use case worth your time is comparing how multiple sources treat the same topic. Picture three textbook chapters on cellular respiration side by side, with rows for each subtopic and columns for how each source covers it. That's the kind of synthesis you'd otherwise do by hand on a Sunday afternoon.

Three outputs, three narrow but real uses. The Briefing Doc is where I would start this week if you have never opened the Reports card. The other two are worth knowing about for the moments they fit, even if you do not reach for them often.

Each section above has walked through one feature at a time. The real time savings, though, show up when you stack two or three of these features into a workflow you run every week.

Below are five workflows worth borrowing. Each one names the inputs, the prompt, and roughly how long it takes. Feel free to adapt the subject and grade band to whatever you teach.

This is the workflow I would start with if you are new to building habits around NotebookLM. It is for a unit you are about to teach next week, and in about 45 minutes it will get you a unit handout, an audio overview, a slide deck, and a quiz.

What you need:

Once you have the three sources ready, here is the workflow:

Focus on the three big ideas in chapter 4 a 9th grader will struggle with. 12 minutes. Pitch at 9th grade reading level.

When you are done, you will have a unit handout, a podcast students can listen to before class, slides for Monday morning, and a quiz for Friday. About 45 minutes of work for the whole stack.

This one is for the new-grade or new-subject year. A teacher in r/HistoryTeachers shared the moment I have in mind:

"I am a new teacher in my first full year of teaching social studies, covering 7th-grade Hawaiian History and 8th-grade US History. I started partway through last year without a full curriculum."

What you need:

Once you have those sources uploaded, generate a Mind Map of the source set and click through the branches. The map is a great reading guide for you, before it is anything for your students. Each node fires a chat question with citations, so the map doubles as an easy way to ask questions of the unit before you have committed the reading time.

Then ask the chat:

Across these sources, identify five concepts a teacher new to this curriculum is likely to under-teach. For each, cite the source page where the concept lands. Note any place where two sources disagree on emphasis.

In my opinion, this find-gaps prompt is the one that turns NotebookLM into a thought partner instead of a content generator. After about 30 minutes you will have a working sense of the unit and the spots that will need extra preparation.

Leah Cleary has shared a version of this as her weekly move, and it is a great one. The principle is one prompt, three outputs.

What you need:

Here is the prompt:

Take the lesson on [topic] from [source X] and produce three versions for students at three reading levels: a 4th grade scaffolded version, a grade-level version, and an extension version for students who finished early. Preserve the same content and learning targets across all three. Cut vocabulary above the grade band, or define it inline.

You will get back three versions of the same lesson, on the same content, from a single chat. Students reading below grade level get the same lesson their classmates do, just at a level they can access.

The three versions are first drafts, not finished worksheets. Edit them the way you would edit any first draft and send them on. (A deeper guide on differentiating AI-generated resources lives here.)

This one is for a project unit where the same source set runs across the class.

What you need:

A higher-ed teacher on r/Professors named the underlying pattern an "intelligent index": one notebook per assignment, holding everything the students might need to reference, with the chat as the entry point.

Once you push the notebook out, your students will see:

What you keep is a class working from one curated source set instead of ten different Google searches, plus the assurance that no student upload will surprise you, because the notebook is read-only.

This last one is for the panic. A teacher in r/notebooklm wrote this on their phone at 3 AM:

"It is the silent, deeply paralyzing fear that your upcoming morning lesson is going to put those VIP observers sitting in the back row straight into a irreversible coma."

It happens to all of us at some point. When it does, here is the fastest move:

Generate a 50-minute lesson plan for [grade] [subject] tomorrow on [topic]. Include a five-minute warm-up, twenty-five minutes of guided instruction, fifteen minutes of student practice, and a five-minute exit ticket aligned to standard [code]. Cite the source page each activity is grounded in.

The output will not be the lesson plan you would have written with a week of preparation. It will be a working first draft that you can edit by 7 AM and teach at 9. You will still want to read through the draft once before class, and you may want to swap an example or two for something closer to where your class actually is.
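If your periods are not 50 minutes, the time blocks in the prompt need to change with them, and it is easy to end up with blocks that no longer add up. Here is a small Python sketch that rebuilds the prompt from a list of blocks and checks the total first. The names `BLOCKS`, `total_minutes`, and `build_lesson_prompt` are my own for illustration, the wording is a light paraphrase of the prompt above, and the standard code is left as a placeholder for you to fill in.

```python
# Hypothetical sketch: rebuild the lesson-plan prompt from a list of time
# blocks and confirm they add up to the class period before you paste it.
BLOCKS = [
    ("warm-up", 5),
    ("guided instruction", 25),
    ("student practice", 15),
    ("exit ticket", 5),
]


def total_minutes(blocks):
    """Add up the minutes across all (name, minutes) blocks."""
    return sum(minutes for _, minutes in blocks)


def build_lesson_prompt(grade, subject, topic, standard, blocks=BLOCKS, period=50):
    """Return the prompt text, or raise if the blocks do not fill the period."""
    total = total_minutes(blocks)
    if total != period:
        raise ValueError(f"blocks add up to {total} minutes, not {period}")
    timing = ", ".join(f"{minutes} minutes of {name}" for name, minutes in blocks)
    return (
        f"Generate a {period}-minute lesson plan for {grade} {subject} "
        f"tomorrow on {topic}. Include {timing}, with the exit ticket aligned "
        f"to standard {standard}. Cite the source page each activity is "
        "grounded in."
    )


if __name__ == "__main__":
    # Grade, subject, and topic are made-up examples; "[code]" stays a placeholder.
    print(build_lesson_prompt("7th grade", "science", "photosynthesis", "[code]"))
```

The default blocks are the ones from the prompt above (5 + 25 + 15 + 5 = 50). For a different period length, pass your own list of blocks and the matching `period` value, and the check will flag any mismatch before the prompt reaches the chat.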

Hopefully one of these five workflows maps to a week you have coming up. As always, I would love to hear which one you reach for first if you decide to give one a try.

If your school uses Google Classroom, you can now create and assign NotebookLM notebooks directly from inside the Classwork tab. Google rolled this out in late September 2025, and it is a great update for K-12 teachers in particular. It means your students under 18 can use NotebookLM through Classroom even though the standalone NotebookLM signup is limited to 18 and over. The notebook lives inside the assignment. Your students engage with it through their Classroom login without needing their own NotebookLM account.

Here is how to set up a teacher-led notebook for your class:

The easiest setup is to leave the sharing mode on View only for any class notebook unless you have a specific reason to do something else. Your students get read-only access to whatever sources you have curated. They can chat with the sources with citations, listen to the audio overview, and study from the flashcards, but they cannot change the configuration. They also do not need access to the original source files, which is great for any unit packets you would rather not pass around in Drive.

There is a newer flow worth knowing about too. As of April 2026, higher-ed students who are 18 and over can create their own personal class notebooks from the Gemini tab in Classroom, grounded in the materials their professor has posted. The student path is Gemini tab → Personal class notebooks → Create class notebook. For K-12, the teacher-led flow above is still the way to go.

If the NotebookLM icon does not appear under Attach in your Classroom, you are certainly not alone. It usually means your school admin has not enabled the service yet. A teacher in the OER Project Teacher's Lounge put it plainly: "Turns out this is locked on the students' Chromebooks but I put in a change request with the powers that be." That is exactly the right move. The setting your admin needs is Gemini, NotebookLM, and Gemini in Classroom all set to On for the student organizational unit (OU). I would send your IT team that exact path in a quick email. In my experience, most schools turn it on within the week.

That should get you and your students set up cleanly inside Classroom. The next section covers what NotebookLM still gets wrong, and what to do when it does.

NotebookLM is good at staying inside your sources, and the source-grounding does most of the work that keeps it useful for teaching. It also gets four things wrong often enough that they're worth naming. None of them requires a different tool. Each has a verification move or workaround that takes about a minute, and any of them can spare you the moment of finding out, mid-class, that the audio overview was built on a source NotebookLM never actually read.

The Sources panel section above named the symptom and walked through the source-preview check. There is a stronger version of the check that lives in the chat. Run this prompt once per new notebook:

For each source I have uploaded, give me a one-sentence summary of the actual content you can read. If you can only see metadata or partial content, say so explicitly.

If a source comes back as "I can see the title and a few headers" or "this appears to be an image", treat it as a failed upload and replace it before generating anything from the notebook.

The Sources panel section above named the per-source-sampling pattern: more sources stacked into one notebook means less data NotebookLM pulls from each one. Two recovery moves:

Asking NotebookLM for "NGSS-aligned" anything is the limit teachers tend to underestimate most. A science educator's r/edtech audit found that lesson content generated for 5th grade was pulling from middle-school-level material, and the NGSS code labels NotebookLM produced did not match what the actual sources cover. That's a hard one to catch if you're not looking for it. The output may have the standard codes in the right format on the page, but until you compare each one against the actual standard, the alignment is mostly cosmetic.

A great workaround is to upload the standards document itself as one of your sources, then check each generated lesson against the relevant standard yourself. Treat every "aligned to standard X" claim NotebookLM makes as a draft, not a finished alignment.

The 13% hallucination rate from the late-2025 study (cited in the orientation section) is the easy number to remember. Better than 40%, not solved. The harder failure mode is the one a teacher in the r/edtech audit thread named: "confident but incomplete is almost more dangerous than a flat-out hallucination."

The Chat panel section walked through the fix. When an answer is heading in front of students, click the citation, read the linked passage in full, and rewrite the framing yourself if the chat is adding a confidence the source does not have.

Catch each of these in advance and NotebookLM stays useful for the work it does well. If you keep running into the same limits and start to wonder whether the tool fits how you teach, this roundup of NotebookLM alternatives covers a few worth a look.

Two questions get raised at every PD on student-facing AI: can my students use it, and is the data I upload safe? The short answers for NotebookLM are yes, through the Classroom integration the prior section covered, and yes, on a school account, with the caveats below.

If you sign in with a school Google Workspace for Education account (Fundamentals, Standard, or Plus), NotebookLM does not train its model on your data, and the workspace is covered under the Education terms, including FERPA and COPPA. If you sign in with a personal Gmail account, the protections are weaker. The account you sign in with sets the floor.

Even on a school account, a few categories deserve a second look:

Students can also upload your slides into their own NotebookLM notebooks to study from. A higher-ed teacher in r/Professors named the friction: "I'm not ok with my intellectual property being turned over to AI." There is no way to prevent it short of not posting the slides. The working move is to post a stripped-down student version separate from your teaching deck, and to add a redistribution clause to your syllabus.

Use a school account and keep identifiable student data out, and the access question stops being something you have to think about every time.

The questions teachers send me about NotebookLM tend to land somewhere in the body of this guide, but a handful come up often enough to answer here in two sentences each. Below are the ones that did not have a natural home upstream.

No. On a school Google Workspace for Education account, NotebookLM does not use your uploaded sources or chat conversations to train its models. On a personal Gmail account the protections are weaker, so anything with student names or identifying detail should stay on your school login.

No, not yet. There is no folder-sync option, so when a Google Doc inside a notebook gets edited you have to refresh the source or re-add it to pick up the changes. This is one of the most-asked-for missing features in the community.

There is no built-in transcript download. The workaround is to download the audio file as an MP3 from the Studio panel and run it through a transcription tool. Or, in the same notebook, ask the chat to summarise what the overview covered. That is faster and keeps the citations attached.

Yes. Click Share in the top-right corner of any notebook and pick View only or Edit access. On a Workspace for Education account, sharing defaults to inside your school domain, which is the right setting for any notebook with student materials.

No, NotebookLM is a web-based product and needs an internet connection to load sources, generate Studio outputs, and run the chat. For a flight or a bad-Wi-Fi day, generate the audio overview on the ground and download the MP3 first.

In my opinion, no, and that is fine. NotebookLM is great at reading and asking questions of a defined set of sources, and it is not built to be a generalist lesson-plan generator the way some other AI tools are. A lot of teachers keep both and reach for whichever one fits the task in front of them.

That covers the residual questions. The conclusion below picks the one workflow worth trying this week.

The teachers who get real value out of NotebookLM tend to pick one workflow and build a habit around it before they try a second. The temptation, after a guide this long, is to try all five at once on Sunday and end up running none of them by Wednesday. The habit comes from running the same workflow for two or three weeks, then reaching for the next.

Pick the one that maps to a week you actually have coming up. If you have a unit landing next Monday, Sunday-night unit prep is the place to start. If you are walking into a new course cold, onboarding yourself to a new curriculum is going to save you the most time. If your students need the same lesson at three different reading levels and you do not have the bandwidth to write three drafts, differentiating one lesson three ways is yours.

Hopefully one of them is the one you've been meaning to try since the start of the school year. As always, I would love to hear which workflow you reach for first if you decide to give one a try.


Monsha Co-Founder & CEO

Hi, I’m Piash - one of the people behind Monsha. I spend most of my time talking to teachers, learning how they work, and building tools to make that easier. Here, I write about practical ways AI can support your workflow, new features we’re building, and stories from real educators using Monsha.
